Delivering Interactive 3D Reference Models and Training to the Point of Need

Virtual Pocket Reference brings spatial understanding to digital instruction

11-05-2020

Gardner Congdon

TRAINING

I pride myself on being a respectable DIY mechanic. That belief was put to the test a few years ago when I found myself under my aging Nissan Pathfinder trying to replace a CV joint.

I knew what I was doing, but the axle was hanging up on something. I couldn’t figure out what the trick was. I checked videos online and that didn’t help. I consulted the owner’s manual, but the flat, 2D, static images didn’t show me the issue. I ended up cutting through a boot – which you shouldn’t need to do – to see the full picture and find exactly what was hanging me up.

This mechanical adventure, together with my earlier career in construction, became the inspiration for SAIC’s Virtual Pocket Reference (VPR). VPR allows us to deliver customized, interactive 3D models coupled with procedural instruction and reference.

The apps we build with VPR can be installed on a variety of devices, including PCs, mobile devices, and tablets, and can be updated in real time via the cloud. This means our soldiers and warfighters can have a high degree of confidence that the procedures and models they interact with are as up to date as possible.

Clearing hurdles of traditional instruction

VPR overcomes a couple of the major drawbacks I encountered while trying to fix my truck. I was physically blocked from seeing the problem and could not identify it until after I improvised a bit, and definitely not in the best way.

Isometric projections and exploded-view diagrams, like those found in owner’s manuals, represent 3D objects as static, two-dimensional images. While they do a good job of capturing all of the components of an assembly, they can’t show an order of operations or the specific way a component needs to be manipulated in order to assemble or disassemble it. They also offer no view other than the one printed. If that view doesn’t happen to line up with the only view you have, you can be in for a long day.

YouTube videos have gained a lot of traction as a fix for this persistent issue, and they do solve some of the issues that traditional training and reference manuals present. The challenge is that videos are limited to the single view that was recorded. If I want to see around or even through a particular part, or if the video is poorly lit, I’m out of luck. And as with any publicly posted video, how trustworthy is the content? Is this really the best way to fix my vehicle, or am I about to create a mess that only a professional mechanic can clean up?

3D models in SAIC applications built with VPR can be rotated, zoomed, animated, and scrubbed through, giving the user a spatial understanding that diagrams and instructional videos can’t. The interactive models are coupled to validated procedures and instruction, delivered through voice and text, that guide users precisely so they understand how to operate and maintain complex systems and achieve mission success.
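To make the idea of scrubbing through a model tied to a procedure more concrete, here is a minimal sketch in Python. It is not VPR's implementation. It assumes a procedure is a non-empty list of steps, each with an instruction and an animation length, and shows how a scrub-bar position could be mapped to the step being shown. The names `Step` and `locate` and the example instructions are hypothetical placeholders, not a validated procedure.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Step:
    instruction: str  # text (or voice prompt) shown alongside the animation
    duration: float   # seconds of animation attached to this step (assumed > 0)


def locate(steps: List[Step], position: float) -> Tuple[int, float]:
    """Map a scrub position in [0, 1] to (step index, progress within that step)."""
    total = sum(s.duration for s in steps)
    # Clamp so dragging past either end of the scrub bar stays in range.
    t = min(max(position, 0.0), 1.0) * total
    elapsed = 0.0
    for i, step in enumerate(steps):
        if t < elapsed + step.duration:
            return i, (t - elapsed) / step.duration
        elapsed += step.duration
    # A position of exactly 1.0 lands on the end of the last step.
    return len(steps) - 1, 1.0


# Hypothetical placeholder steps, for illustration only.
steps = [
    Step("Remove the axle nut", 2.0),
    Step("Separate the lower ball joint", 4.0),
    Step("Slide the axle out of the hub", 2.0),
]

index, progress = locate(steps, 0.5)
print(steps[index].instruction, progress)
```

Keeping the instruction and the animation length in one record is the point of the sketch: wherever the user drags the scrub bar, the text on screen and the pose of the model come from the same step.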

Mixing instruction with virtual reality

From the inception of VPR, we knew it needed to support virtual and augmented reality interfaces. Built using modern game engine technology, VPR applications can run on AR and VR headsets, or on smartphones and tablets that support extended reality. Using a headset allows users to operate the application hands-free through voice or gesture commands.

We’ve also integrated VPR into SAIC’s broader experiential synthetic training platform, which provides a data-rich training environment where actions taken in the training app have consequences in the synthetic environment. Performance in the synthetic environment steers the training app to focus on gaps in terminal learning objectives.

By combining interactivity, 3D models, procedural information, cloud updates, and support for extended reality, and delivering all of it at the point of need, VPR becomes a truly next-generation training and reference enabler.

Application to Pilot Training Next

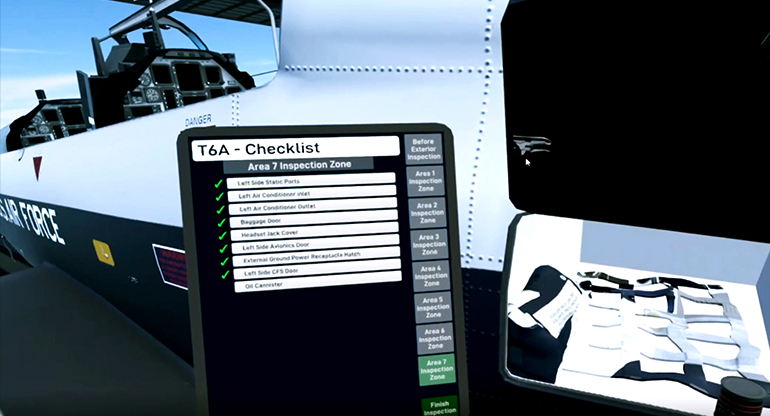

We applied VPR in the Pilot Training Next program for the U.S. Air Force. When undergraduate pilots go through the preflight checklist on the T-6 aircraft in VR, VPR powers the procedures and the interactive model of the T-6.

We also integrated VPR with the experiential synthetic training environment so that if a pilot misses something on their preflight checklist, that error may have consequences later when flying in the simulation. For example, if they don’t check that the brake wear indicators are within spec, their T-6 may overshoot the runway when landing. VPR not only helps users understand how different components function together, but also ties that understanding to the consequences of the performance and decisions they make in hip-pocket training.
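The link between a skipped checklist item and a later consequence can be sketched in a few lines of Python. This is an illustration under stated assumptions, not how VPR or the synthetic training environment is built. It assumes each checklist item carries a label for the fault that the simulation should activate if the item is skipped. `ChecklistItem`, `PreflightSession`, the fault labels, and the second checklist item are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class ChecklistItem:
    name: str
    fault_if_skipped: str  # hypothetical fault label handed to the simulation


@dataclass
class PreflightSession:
    items: List[ChecklistItem]
    completed: Set[str] = field(default_factory=set)

    def check(self, name: str) -> None:
        """Record that the trainee completed the named checklist item."""
        self.completed.add(name)

    def latent_faults(self) -> List[str]:
        """Faults to carry into the flight, one per skipped item, in checklist order."""
        return [
            item.fault_if_skipped
            for item in self.items
            if item.name not in self.completed
        ]


session = PreflightSession(
    items=[
        ChecklistItem("brake wear indicators", "reduced_braking"),
        ChecklistItem("example item B", "example_fault_b"),  # placeholder item
    ]
)
session.check("example item B")  # the trainee skips the brake check
print(session.latent_faults())
```

In this sketch the training app only reports what was skipped. Deciding what a fault such as reduced braking does to the aircraft on landing is left to the simulation.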

Future of VPR

We’ve been thrilled with the success of VPR in the Pilot Training Next program and are working to build on it. Much of VPR’s value lies in its ability to import and use existing models and written procedures, which makes custom apps built with VPR affordable and quick to develop.

Applications built using VPR are available as standalone products or may be integrated into SAIC’s experiential synthetic training platform. VPR can also be used in conjunction with SAIC’s curated microlearning platform, in-SITE.

Because it runs on a wide variety of devices, VPR enables 21st-century hip-pocket training. Whether it’s a warfighter learning the preflight procedure for an aircraft or a DIY mechanic like me trying to better understand a maintenance procedure, VPR enables the spatial understanding that users need to successfully complete their tasks and missions.